BME researchers in the Calcium Signals and Neural Encoding Laboratories at Johns Hopkins have mapped the sound-processing part of the mouse brain (the auditory cortex) in a way that keeps both the proverbial forest and the trees in view. Their imaging technique allows for zooming in and out on views of brain activity within individual mice, whereas previous techniques often lock into one scale of view or the other. In this way, the Hopkins team could readily find and then zoom in to watch certain brain cells that light up as mice “call” to each other, a step toward better understanding of how our own brains process language. The results appear as the cover and lead article of the July 31 issue of the journal Neuron, under the title “Multiscale Optical Ca2+ Imaging of Tonal Organization in Mouse Auditory Cortex.”

In the past, researchers often studied sound processing in the brain by blindly poking tiny electrodes into the auditory cortex, playing tones to observe the response of nearby neurons, and then laboriously repeating the process over a grid-like pattern to infer neuron location within an overall landscape. Intriguingly, neurons seemed to be spatially laid out in neatly organized tone bands, whose favorite frequency changed smoothly across the cortex. More recently, a technique called two-photon microscopy has allowed researchers to focus in on minute regions of live mouse brains and observe in considerable detail all the neurons within the zoomed-in sectors. Paradoxically, this latter approach suggests that the well-manicured arrangement of bands might be an illusion produced by averaging behavior over many neighboring neurons. The orderly layout appears to become fractured when neurons in close quarters are actually monitored individually by two-photon microscopy. But, say David Yue, MD, PhD, and Eric Young, PhD, both professors of biomedical engineering and neuroscience at the Johns Hopkins University School of Medicine involved in the current study, “You could lose your way within the zoomed-in views afforded by two-photon microscopy and not know exactly where you are in the brain.”

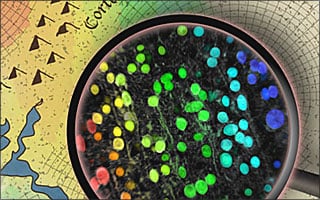

To get a bigger and better picture, John Issa, a graduate student in Yue’s lab, used a mouse genetically engineered to produce a molecule that glows green in the presence of calcium. Since neurons elevate their calcium levels when they become active, neurons in the auditory cortex glow green when activated by various sounds. Remarkably, Issa discovered that he could first peer through the skulls of mice to observe the auditory cortex emitting green flashes when sounds were played, allowing a global map to be readily resolved for a given mouse. Two-photon microscopy then furnished a zoomed-in view of individual neurons in the same mouse, but in this case the location of the neurons was precisely known relative to the global map. As Issa puts it, “With these mice, we were able to both monitor the activity of individual populations of neurons, and ‘zoom out’ to see how those populations fit into a larger organizational picture.”

With these advances, Issa, fellow graduate student Ben Haeffele, and the rest of the research team were indeed able to resolve the tidy tone bands of more traditional studies. More importantly, the new imaging platform quickly revealed more sophisticated properties of the auditory cortex, particularly as mice listened to the chirps they use to communicate with each other. “Understanding how sound representation is organized in the brain is ultimately very important for better treating hearing deficits,” Yue says. “We hope that mouse experiments like this can provide a basis for figuring out how our own brains process language and, eventually, how to help people with cochlear implants and similar interventions hear better.” Yue led the current study along with Eric Young, PhD, also a professor of biomedical engineering and neuroscience in Johns Hopkins’ Institute for Basic Biomedical Research.