Computer vision algorithms show promise for improving the accuracy of colonoscopy, a screening procedure that uses a camera to view the inside of the large intestine. To train and assess these algorithms, researchers need a properly labeled dataset that contains “ground truth,” or information that is verified to be true in reality. So far, such datasets have been difficult to acquire for colonoscopy images.

A Johns Hopkins University research team has created a dataset of simulated videos, called C3VD, that is more representative of colonoscopy imaging and can help researchers evaluate how their computer vision models will perform in real-world scenarios. Published recently in Medical Image Analysis, the research addresses major issues that make colonoscopy a challenging application for computer vision tasks such as 3D reconstruction and depth estimation.

Ultimately, the team hopes the new open-source dataset will enable the wider research community to develop better computer vision tools for colonoscopy applications.

Research Highlights

- C3VD is the first colonoscopy reconstruction dataset that includes realistic colonoscopy videos labeled with registered ground truth.

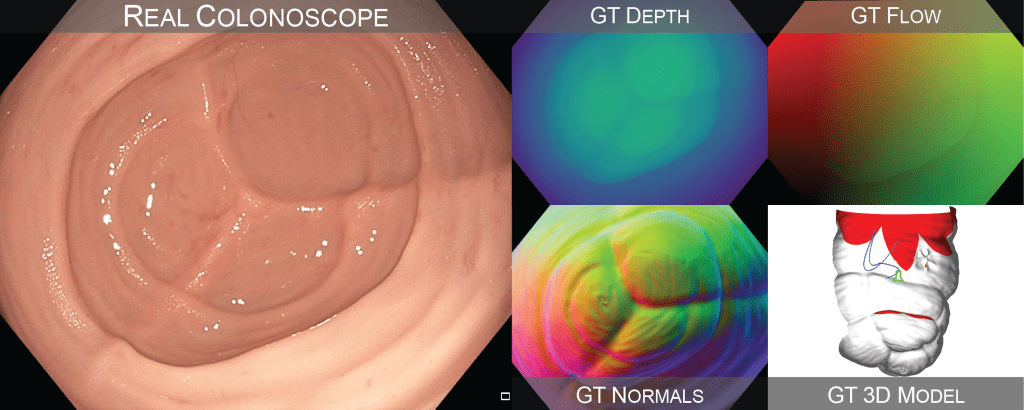

- C3VD is recorded entirely with an HD clinical colonoscope and includes depth, surface normal, occlusion, and optical flow frame labels.

- The project is a joint effort between Hopkins experts in optics and computer science, medical art, and gastroenterology.

- Future directions, according to the researchers, include using the dataset to evaluate advanced computer vision algorithms that can assist endoscopists in real time during colonoscopy.

Research Overview

To develop the dataset, researchers from the Department of Art as Applied to Medicine first created a digital sculpture of the colon from reference anatomical images; the model was then physically cast in silicone. Using the silicone colon model, the research team recorded video sequences with a clinical HD colonoscope to capture realistic lighting, sensor gain, gamma, and noise, all of which can challenge the performance of computer vision algorithms.

Once they recorded the simulated colonoscopy videos, the researchers needed to virtually align the videos to the models so that they could extract ground truth information for each frame. They developed a registration algorithm using a combination of artificial intelligence, ray tracing, and optimization. By registering the videos with the models, they created a video dataset with more than 10,000 frames, where each frame has pixel-level registered ground truth depth, normals, optical flow, occlusion, coverage, and poses.

“These are essential components for many computer vision algorithms, making our dataset useful for various potential applications that may improve colonoscopy quality,” said Taylor Bobrow, a graduate student in biomedical engineering and lead author of the paper.
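As a rough illustration of how per-frame ground truth depth can be used for evaluation, the sketch below compares a predicted depth map against a ground truth depth map using two common error measures. It is a minimal example under stated assumptions, not the team's evaluation code: the function name `depth_errors`, the tiny arrays, and the convention that a non-positive depth marks a pixel without a label are all hypothetical.

```python
import numpy as np

def depth_errors(pred, gt, valid=None):
    """Return (RMSE, mean absolute relative error) over valid pixels."""
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    if valid is None:
        # Assumption for this sketch: non-positive depth means "no label".
        valid = gt > 0
    diff = pred[valid] - gt[valid]
    rmse = float(np.sqrt(np.mean(diff ** 2)))
    abs_rel = float(np.mean(np.abs(diff) / gt[valid]))
    return rmse, abs_rel

# Toy 2x2 "frame": one pixel (value 0.0) has no ground truth and is skipped.
gt = np.array([[10.0, 20.0], [0.0, 40.0]])
pred = np.array([[11.0, 18.0], [5.0, 40.0]])
rmse, abs_rel = depth_errors(pred, gt)
print(f"RMSE: {rmse:.3f}, mean absolute relative error: {abs_rel:.3f}")
```

In practice the same comparison would be run over every labeled frame in a video sequence and the errors averaged.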

While the primary contribution of their study was the validation dataset, Bobrow says the team also made an interesting finding related to the registration algorithm. Most work on 2D-to-3D registration has been focused on applications in radiological systems via X-rays, whereas their team works with optical technologies. The team discovered that transforming optical images to the depth domain for registration resulted in significant improvements in registration accuracy, as compared to directly registering the optical images.
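To make the idea of registering in the depth domain concrete, here is a toy sketch, not the team's algorithm. It assumes the video frame has already been converted to a depth map, then searches for the model pose whose ray-traced depth best matches it. Everything in it is a simplification chosen for illustration: the "model" is a single sphere, the pose has one unknown (the sphere's distance `cz` from the camera), and a brute-force search over candidates stands in for the optimizer.

```python
import numpy as np

def render_depth(cz, radius=10.0, size=32):
    """Ray-trace the z-depth of a sphere centred at (0, 0, cz),
    seen by a pinhole camera at the origin looking along +z."""
    u, v = np.meshgrid(np.linspace(-0.5, 0.5, size),
                       np.linspace(-0.5, 0.5, size))
    dirs = np.stack([u, v, np.ones_like(u)], axis=-1)
    dirs /= np.linalg.norm(dirs, axis=-1, keepdims=True)  # unit ray directions
    b = dirs[..., 2] * cz                    # ray direction dotted with centre
    disc = b ** 2 - (cz ** 2 - radius ** 2)  # discriminant of the ray-sphere quadratic
    hit = disc >= 0
    t = b - np.sqrt(np.where(hit, disc, 0.0))  # distance to the nearest intersection
    return np.where(hit, t * dirs[..., 2], 0.0)  # rays that miss get depth 0

def register(target_depth, candidates):
    """Return the candidate pose whose rendered depth is closest to the target."""
    costs = [np.mean((render_depth(cz) - target_depth) ** 2) for cz in candidates]
    return float(candidates[int(np.argmin(costs))])

# Synthetic "observed" depth map; in a real pipeline this would come from the video frame.
target = render_depth(50.0)
candidates = np.arange(30.0, 70.5, 0.5)
estimate = register(target, candidates)
print("Estimated sphere distance:", estimate)
```

A real registration would estimate a full six-degree-of-freedom camera pose against the colon model and would have to cope with noisy, imperfect depth estimates, which this sketch leaves out.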

Broader Impacts

A potential future application for the dataset is to minimize missed lesions during screening colonoscopies. Missed lesions are a major contributor to post-colonoscopy colorectal cancer; researchers are trying to solve this problem by developing real-time feedback systems that alert the endoscopist if they do not inspect a region of tissue during the procedure. And because these unobserved regions could be hiding lesions, a big focus is on creating algorithms that help endoscopists achieve complete visualization of the colon, said Bobrow. The researchers say their dataset can be used as a tool for validating algorithms that flag such missed regions.

Meet the Research Team

- Taylor L. Bobrow, Graduate Student, Biomedical Engineering

- Mayank Golhar, Graduate Student, Biomedical Engineering

- Rohan Vijayan, Graduate Student, Biomedical Engineering

- Nicholas J. Durr, Associate Professor, Biomedical Engineering

- Venkata S. Akshintala, Assistant Professor, Gastroenterology

- Juan R. Garcia, Associate Professor, Art as Applied to Medicine